China's Z.ai (formerly Zhipu AI) has officially shaken up the AI coding space with GLM-5.1. Reaching 94.6% of Claude Opus 4.6's score on a 113-task coding evaluation, with plans starting at just $10/month (up to 30% off yearly), this model marks one of the most significant milestones in open-source AI history. We dive deep into how its massive 744B-parameter MoE architecture, compatibility with 20+ coding tools like Cursor and Cline, and free built-in MCP tools made this possible, and we explore real developer reactions.

The Arrival of GLM-5.1: Changing the Open Source AI Landscape 🎯

When Z.ai's Global Lead Li Zixuan announced the arrival of the new model with a simple message: "Don't panic. GLM-5.1 will be open source," it sent shockwaves through the AI developer community. GLM-5.1 wasn't just another update—it marked the dawn of a new era where open source models stand shoulder-to-shoulder with closed-source enterprise champions.

Key Points

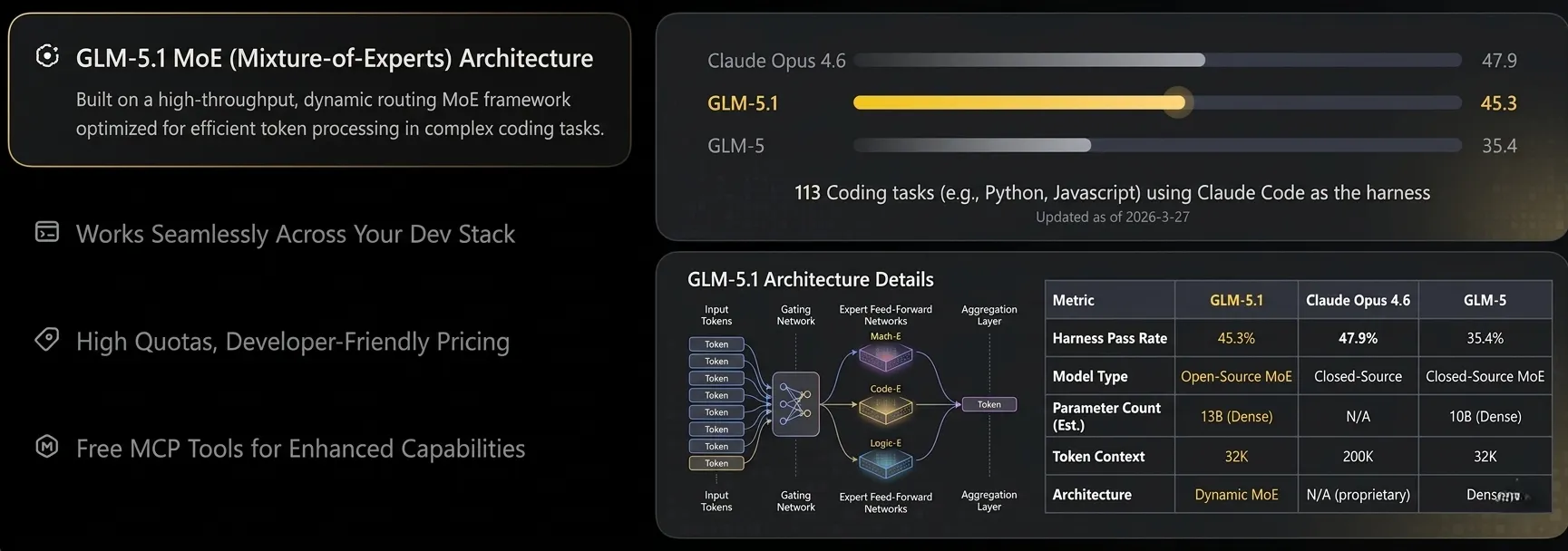

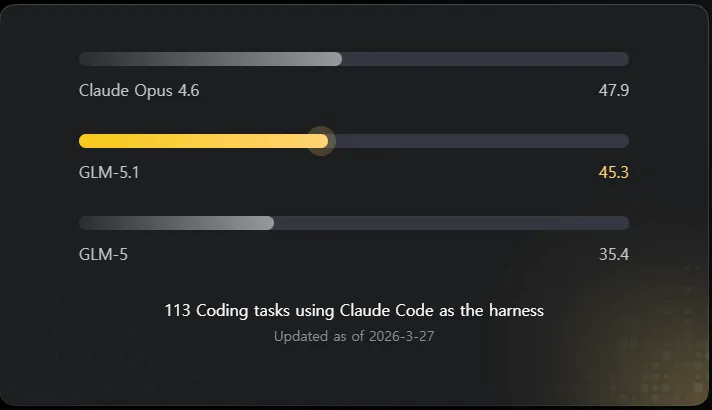

GLM-5.1 achieves 94.6% of Claude Opus 4.6's performance. Scoring 45.3 in a rigorous 113-task coding evaluation, it demonstrates top-tier coding, reasoning, and agentic capabilities among open source models.

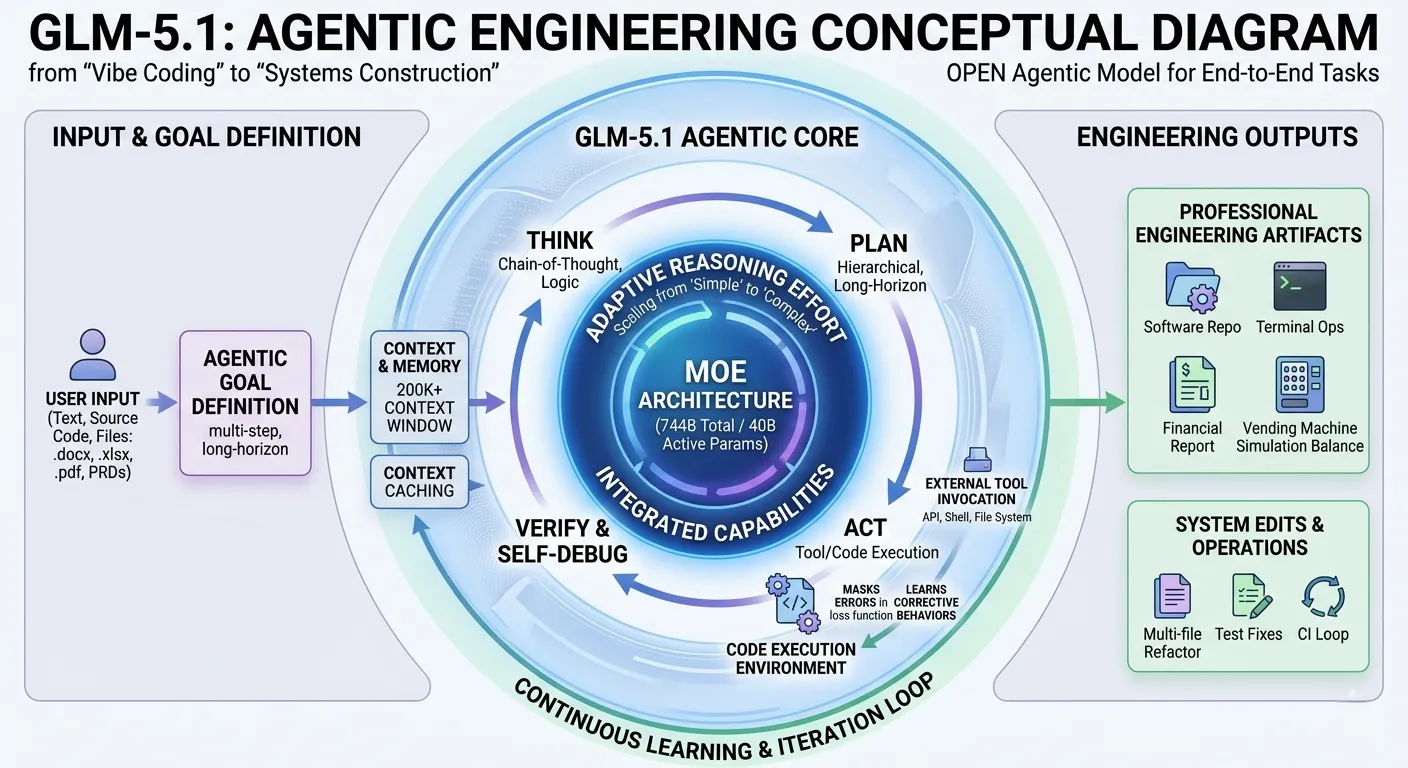

The core innovation of GLM-5.1 is its paradigm shift toward "Agentic Engineering." Moving beyond traditional "Vibe Coding," it's designed for complex systems engineering and long-horizon tasks—meaning it doesn't just generate a snippet, but reliably executes plans and to-dos to build entire applications.

Architecture Deep Dive: The Secret of 744B MoE 🏗️

Behind GLM-5.1's impressive capabilities lies an innovative architectural design. With 744B total parameters in a Mixture of Experts (MoE) structure, it activates only 40-44B parameters per inference—perfectly balancing efficiency with raw intellectual horsepower.

256 Expert Networks

A sparse structure that activates only the top 8 of 256 experts (about 3.1% of the experts), so that roughly 5.9% of the total parameters (up to 44B of 744B) are active during inference.
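To make the routing numbers concrete, here is a minimal sketch of top-k expert selection. The random router scores, the function name `top_k_route`, and the softmax over the selected scores are assumptions for illustration only; the article does not describe GLM-5.1's actual gating function. The last two lines simply redo the arithmetic: 8 of 256 experts, and 44B of 744B parameters.

```python
import math
import random

NUM_EXPERTS = 256  # from the article
TOP_K = 8          # from the article


def top_k_route(scores, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected scores only. This is an assumed,
    commonly used normalization, not a confirmed detail of GLM-5.1.
    """
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


if __name__ == "__main__":
    rng = random.Random(0)
    scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # hypothetical router output
    routed = top_k_route(scores)
    print(f"experts used: {len(routed)} of {NUM_EXPERTS}")
    print(f"share of experts active: {TOP_K / NUM_EXPERTS:.3f}")
    print(f"share of parameters active (44B of 744B): {44 / 744:.3f}")
```

Only the selected experts run for a given input, which is why the compute cost tracks the active parameter count rather than the full 744B.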

200K Context Window

Supporting 200K input tokens and up to 128K output, enabling whole-codebase analysis and complex refactoring.
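As a rough sketch of what these limits mean in practice, the helper below estimates whether a set of source files fits in the 200K input window and caps a requested output length at 128K. The 4-characters-per-token ratio is an assumed heuristic, not GLM-5.1's real tokenizer, and the function names are hypothetical.

```python
MAX_INPUT_TOKENS = 200_000   # from the article
MAX_OUTPUT_TOKENS = 128_000  # from the article
CHARS_PER_TOKEN = 4          # assumption: rough heuristic, not the real tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate a token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(files: dict[str, str], prompt: str = "") -> tuple[bool, int]:
    """Return (fits, estimated_tokens) for a prompt plus a set of source files."""
    total = estimate_tokens(prompt)
    total += sum(estimate_tokens(src) for src in files.values())
    return total <= MAX_INPUT_TOKENS, total


def clamp_max_tokens(requested: int) -> int:
    """Cap a requested output length at the stated 128K output limit."""
    return min(requested, MAX_OUTPUT_TOKENS)


if __name__ == "__main__":
    repo = {"app.py": "print('hi')\n" * 1000, "util.py": "x = 1\n" * 500}
    ok, used = fits_in_context(repo, prompt="Refactor this project.")
    print(f"estimated input tokens: {used}, fits: {ok}")
    print(f"output budget: {clamp_max_tokens(500_000)}")
```

For a real project, swapping `estimate_tokens` for an actual tokenizer count would give a more reliable answer.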

DeepSeek Sparse Attention

The integrated DeepSeek Sparse Attention (DSA) mechanism dramatically reduces compute and memory costs, even when tracking long contexts.

Slime Async RL

The novel "Slime" asynchronous reinforcement learning infrastructure maximizes large-scale RL training efficiency.

```python
# GLM-5.1 Core Specifications
MODEL_CONFIG = {
    "total_parameters": "744B",    # Total parameters
    "active_parameters": "40-44B", # Activated during inference
    "experts": 256,                # Total number of experts
    "top_k_experts": 8,            # Activated experts
    "sparsity": "5.9%",            # Sparsity ratio
    "context_window": "200K",      # Input context limit
    "max_output": "128K",          # Maximum output limit
    "hardware": "Huawei Ascend",   # Independent hardware stack
    "license": "MIT",              # Open source license
}
```

Benchmark Comparison: How Close to Claude Opus? 📊

In an evaluation based on 113 rigorous coding tasks using Claude Code as the harness, GLM-5.1 scored 45.3, just 2.6 points behind Claude Opus 4.6 (47.9) and close enough for most day-to-day coding needs.

| Model | Coding Score | Gap to Opus | Monthly Cost |
|---|---|---|---|
| Claude Opus 4.6 | 47.9 | — | $100-200 |
| GLM-5.1 🏆 | 45.3 | -2.6 (94.6%) | $10-80 |
| GLM-5 | 35.4 | -12.5 (73.9%) | Included |
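The gap and percentage columns follow directly from the raw scores, and the short sketch below recomputes them. The scores are taken from the article; the function name `relative_to_baseline` is just an illustrative choice.

```python
SCORES = {"Claude Opus 4.6": 47.9, "GLM-5.1": 45.3, "GLM-5": 35.4}  # from the article
BASELINE = "Claude Opus 4.6"


def relative_to_baseline(scores, baseline=BASELINE):
    """Map each model to (point gap, percent of baseline), rounded to 1 decimal."""
    base = scores[baseline]
    return {
        name: (round(score - base, 1), round(100 * score / base, 1))
        for name, score in scores.items()
    }


if __name__ == "__main__":
    for name, (gap, pct) in relative_to_baseline(SCORES).items():
        print(f"{name}: gap {gap:+.1f}, {pct:.1f}% of {BASELINE}")
```

So "94.6%" means 45.3 divided by 47.9, a ratio of scores on this one evaluation rather than a general measure of capability.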

The testing environment makes this result even more notable. Claude Code is a harness optimized for Anthropic's models, yet GLM-5.1 reached 94.6% of Opus's score in this "away game."

Pricing Revolution: Developer-Friendly Plans & Free MCP 💰

GLM Coding Plans offer unbeatable value. Starting from only $10/month, you enjoy up to 3× the usage of a comparable Claude plan. Subscribers can also opt for quarterly (-10%) or yearly (-30%) discounts.

Lite Plan: $10/mo

- ✔️ 3× Claude Pro plan usage
- ✔️ For lightweight workloads
- ✔️ 20+ coding tools support

Pro Plan: $30/mo

- ✔️ 5× Lite plan usage
- ✔️ 40%–60% faster generation
- ✔️ Priority access to new models
- ✔️ Free MCP Tools Included

Max Plan: $80/mo

- ✔️ 4× Pro plan usage
- ✔️ Guaranteed peak-hour speed
- ✔️ High-volume workloads
- ✔️ First access to new features

* Note: Save up to 30% with an annual subscription. Z.ai also offers a "Refer & Earn" program granting up to 20% bonus credits for inviting friends with no limits.
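For a quick comparison of the plans, the sketch below works out the effective monthly price under each billing period and the price per "Lite plan's worth" of usage. It assumes the quoted discounts (10% quarterly, 30% yearly) apply uniformly to each plan's list price and takes the usage multipliers at face value; the article only says "up to 30%", so actual prices may differ.

```python
MONTHLY_PRICE = {"Lite": 10, "Pro": 30, "Max": 80}  # USD, from the article
# Assumption: the stated discounts apply equally to every plan's list price.
DISCOUNT = {"monthly": 0.0, "quarterly": 0.10, "yearly": 0.30}
# Usage relative to the Lite plan: Pro is 5x Lite, Max is 4x Pro.
USAGE_VS_LITE = {"Lite": 1, "Pro": 5, "Max": 5 * 4}


def effective_monthly(plan, billing="monthly"):
    """Per-month price in USD after the billing-period discount."""
    return round(MONTHLY_PRICE[plan] * (1 - DISCOUNT[billing]), 2)


def price_per_lite_unit(plan, billing="monthly"):
    """Price per 'Lite plan worth' of usage, for comparing plans."""
    return round(effective_monthly(plan, billing) / USAGE_VS_LITE[plan], 2)


if __name__ == "__main__":
    for plan in MONTHLY_PRICE:
        yearly = effective_monthly(plan, "yearly")
        unit = price_per_lite_unit(plan)
        print(f"{plan}: ${yearly}/mo billed yearly, ${unit} per Lite-unit at list price")
```

Under these assumptions the higher tiers cost less per unit of usage, so the choice mostly comes down to how much volume you actually need.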

Practical Integration: Working with Cursor, Cline & More 🛠️

GLM-5.1 works seamlessly across your entire dev stack. It is compatible with 20+ popular AI coding tools, offering massive flexibility. Supported tools include: Claude Code, Cursor, Roo Code, Kilo Code, Cline, OpenCode, Crush, and Goose.

Free Model Context Protocol (MCP) Tools

A massive differentiator for the Pro and Max plans is the inclusion of free built-in MCP tools (Vision, Web Search, and Web Reader) that give the AI real-time awareness and vision.

Setting Up Claude Code

```json
{
  "model": "GLM-5.1",
  "api_provider": "z.ai",
  "api_key": "your_zai_api_key",
  "max_tokens": 128000,
  "temperature": 0.7
}
```

Community Reactions: Real User Reviews 💬

The developer community is rapidly switching over to GLM-5.1. Here are some verified experiences from actual users of the Z.ai Coding Plan:

"I tried GLM Coding Pro out of curiosity, and the results were surprisingly good: it not only quickly generated preprocessing code without errors, but also helped optimize the feature selection logic and even provided multiple hyperparameter tuning solutions. What originally took a week was completed in just two days."

Louis Lau

"GLM shocked me with how good it is tbh. It just seems to do what I want more reliably than other models, less reworking of prompts needed."

DivDev

"Since getting the GLM Coding-plan, I've been able to operate more freely and focus more on the core development without worrying about resource shortages that could hinder progress. Additionally, GLM's overall workflow from planning to project execution performs excellently, allowing me to confidently entrust it with the task."

starscat.tsx

Future Outlook: Open Source Promise and Ecosystem Expansion 🔮

Z.ai has officially committed to open sourcing GLM-5.1, a crucial decision that should help democratize AI development. GLM-5 is already available on Hugging Face and ModelScope under the MIT license, and the 5.1 weights are highly anticipated.

2026 AI Coding Tools Selection Guide

- Best Agentic Value & High Quotas: GLM-5.1 (Z.ai)
- Conversational & Teaching: Claude 4.6
- Raw Mathematical Reasoning: DeepSeek-V3.2
- Enterprise Constraints: GPT-5.1

Conclusion: Who Should Be Your Next Coding Partner? 🎯

The launch of GLM-5.1 marks a definitive turning point in open source AI history. Delivering 94.6% of Claude Opus 4.6's performance starting at just $10/month, and integrating effortlessly with tools like Cursor, Cline, and Claude Code, this model is practically a no-brainer for serious developers.

- ✅ Unbeatable Value: Starts at $10/mo, with up to 3× the usage of a comparable Claude plan.

- ✅ Top-Tier Model: 45.3 Coding Score (Claude Opus is 47.9).

- ✅ Works Anywhere: Compatible with 20+ popular AI coding tools.

- ✅ Free MCP Tools: Vision, Web Search, and Web Reader built right into Pro/Max plans.

🚀 Code with AI. Crush a week's work in hours.

Experience GLM-5.1 today at z.ai. The future of coding is open.