On March 31, 2026, an event shook the AI industry. The complete source code of Claude Code, one of the world's premier AI coding agents, was accidentally released through an npm package. Overnight, 512,000 lines of TypeScript, 44 hidden feature flags, and a set of unreleased features were in the hands of developers worldwide. The incident goes far beyond a simple security mistake and raises fundamental questions about the future of AI development.

The Beginning: The 59.8MB Source Map Shock 🚨

Everything began with a single tweet at 4:23 AM Eastern Time on March 31, 2026, from a security researcher named Chaofan Shou. He discovered a 59.8MB JavaScript source map file (cli.js.map) in Claude Code npm package version 2.1.88.

⚡ Key Fact

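Source maps are files developers use to debug minified code by mapping it back to the original source. When a map embeds the original files (the `sourcesContent` field), it acts like a "decoder ring" from which the original TypeScript can be recovered. For closed-source software, these files should not ship in a published package.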

The problem started with Bun, a build tool that generates source maps by default. Anthropic's developers either forgot to disable this setting for production builds or omitted a single line from their .npmignore file. This simple mistake exposed 512,000 lines of code across 1,900 files.
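A pre-publish check is one way to catch this class of mistake. The sketch below is a minimal illustration, not Anthropic's release tooling: the function name `find_blocked_files` and the pattern list are assumptions, and the file list is assumed to come from something like the output of `npm pack --dry-run`.

```python
from fnmatch import fnmatch

# Hypothetical patterns for files that should not ship in a closed-source package.
BLOCKED_PATTERNS = ["*.map"]


def find_blocked_files(packed_files, patterns=BLOCKED_PATTERNS):
    """Return the packed file paths whose file name matches a blocked pattern."""
    return [
        path
        for path in packed_files
        if any(fnmatch(path.rsplit("/", 1)[-1], pattern) for pattern in patterns)
    ]


if __name__ == "__main__":
    # Illustrative file list; in practice it would be read from the packaging step.
    packed = ["package/cli.js", "package/cli.js.map", "package/README.md"]
    leaked = find_blocked_files(packed)
    if leaked:
        print("Refusing to publish; source maps found:", leaked)
```

Run as a release gate, a non-empty result would stop the publish step. The equivalent .npmignore fix would presumably be a single `*.map` entry, though the article does not say which line was missing.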

Anthropic immediately issued an official statement: "This morning, some internal source code was included in a Claude Code release. No sensitive customer data or credentials were exposed, and this was a human error in release packaging, not a security breach." But it was too late. The internet never forgets, and the code had already been replicated across thousands of GitHub mirrors.

"This is not just a chatbot wrapper. Claude Code is closer to an operating system for software work. Permissions, memory layers, background jobs, IDE bridges, MCP pipelines, multi-agent orchestration—all of it is stacked around the model." – eWeek Analysis

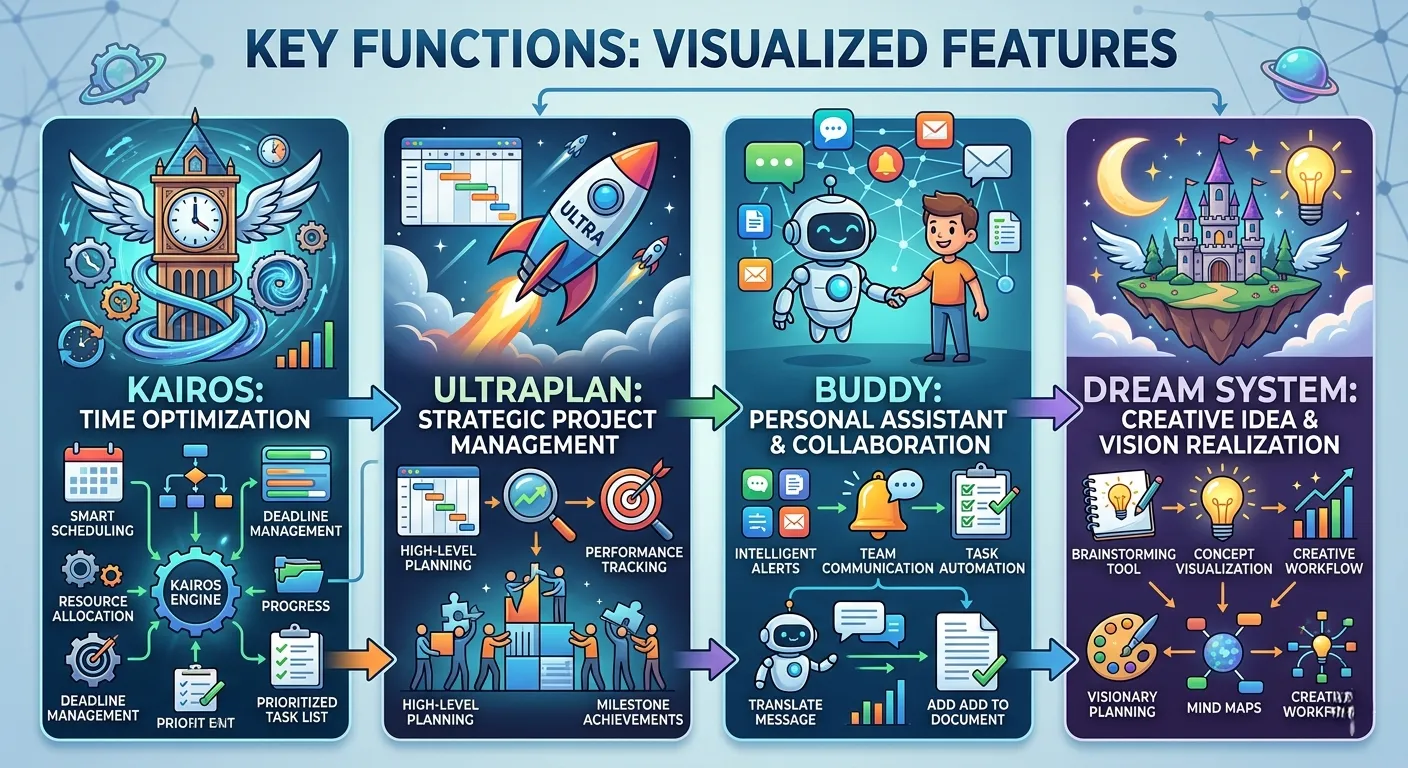

Revealed Future: KAIROS, ULTRAPLAN, and Buddy 🚀

The most fascinating discoveries from the leaked code were the 44 hidden feature flags. These represent innovative features Anthropic hasn't publicly announced yet, serving as windows into the future of AI coding tools.

🔮 KAIROS: The AI That Knows Without Asking

Current AI coding tools are reactive: the user types a request, and the AI responds. KAIROS flips this paradigm. It's a persistent background daemon that monitors your workspace, writes daily observation logs, and acts when it finds something noteworthy, without being asked.

With a 15-second blocking budget built in, it won't disrupt your flow with slow operations. It receives periodic "tick" prompts to decide whether to act or stay quiet. This isn't an assistant waiting for instructions; it's a colleague who pays attention to your project and flags issues proactively.
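To make the tick-and-budget idea concrete, here is a minimal sketch of how a single tick might be handled. Only the 15-second figure comes from the leak coverage; the `Observation` type, its `estimated_seconds` field, and the `handle_tick` function are hypothetical and do not reflect the actual KAIROS implementation.

```python
from dataclasses import dataclass

BLOCKING_BUDGET_SECONDS = 15.0  # figure reported in the article


@dataclass
class Observation:
    summary: str
    noteworthy: bool
    estimated_seconds: float  # hypothetical cost estimate for acting on it


def handle_tick(observations, budget=BLOCKING_BUDGET_SECONDS):
    """On one tick, act on noteworthy items that fit the budget and defer the rest."""
    actions, deferred = [], []
    remaining = budget
    for obs in observations:
        if not obs.noteworthy:
            continue  # stay quiet about routine changes
        if obs.estimated_seconds <= remaining:
            actions.append(obs.summary)
            remaining -= obs.estimated_seconds
        else:
            deferred.append(obs.summary)  # too slow to run without blocking
    return actions, deferred
```

With this shape, a quick check such as flagging a failing test runs immediately, while a long rebuild is deferred instead of interrupting the user.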

🧠 ULTRAPLAN: 30 Minutes of Deep Thought

Currently, asking Claude for complex strategic planning requires step-by-step guidance. ULTRAPLAN automates this entirely. It offloads complex planning to a remote cloud container running Opus 4.6, thinks for up to 30 minutes, then presents results through a browser-based interface for approval.

For enterprises handling complex project planning, litigation strategy, or deal structuring, 30 minutes of autonomous thinking followed by structured output represents a meaningful leap forward.

🎮 Buddy: A Tamagotchi Inside Your AI Coding Tool

One of the most surprising discoveries was a system called "Buddy." This is a virtual companion living inside your terminal—a complete Tamagotchi-style AI pet with 18 species, rarity tiers, and stats including debugging, patience, chaos, and wisdom.

Buddy was originally planned for a surprise rollout from April 1-7, with a full launch in May. The discovery suggests Claude Code is aiming for an emotional connection with users, beyond being a pure productivity tool.

🌙 Dream System: AI That Dreams

The most subtle yet important feature is the Dream System. This is a background memory integration engine where Claude "dreams" while you're away. It merges observations, removes contradictions, and converts hazy insights into reliable facts.
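The consolidation step described here can be sketched in a few lines. This is an illustration under stated assumptions, not the leaked implementation: observations are modeled as hypothetical `(day, key, value)` tuples, the newest value for a key overrides older contradictory ones, and a value is promoted to a fact only after it has been seen `min_support` times.

```python
from collections import defaultdict


def consolidate(observations, min_support=2):
    """Merge (day, key, value) observations into a dict of stable facts.

    For each key the most recent value wins, which drops older contradictory
    values. A value is promoted to a fact only if it was observed at least
    `min_support` times.
    """
    history = defaultdict(list)
    for day, key, value in sorted(observations):
        history[key].append(value)

    facts = {}
    for key, values in history.items():
        latest = values[-1]
        if values.count(latest) >= min_support:
            facts[key] = latest
    return facts
```

For example, a project observed using `jest` on day 1 and `vitest` on days 2 and 3 would end up with `vitest` as the recorded test runner, while a one-off observation stays out of the fact set until it is seen again.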

For anyone frustrated by context window limitations or disappointed when AI "forgets" mid-project, this is Anthropic's answer.

The Korean Developer's Counterattack: instructkr/claw-code Phenomenon 🇰🇷

The most dramatic development in this incident came from Sigrid Jin, a Korean developer profiled by the Wall Street Journal as having consumed 25 billion Claude Code tokens in one year. He received the news at 4 AM and immediately sprang into action.

04:00 AM - Incident Awareness

Sigrid Jin learns about the source code leak

04:30 AM - Strategy Formulation

Plans clean-room Python reimplementation using oh-my-codex (OmX)

06:00 AM - Release

Publishes instructkr/claw-code repository on GitHub

08:00 AM - Historic Record

First time in GitHub history: 50,000 stars in 2 hours

The fact that his girlfriend is a copyright lawyer adds an ironic twist to this story. Rumor has it she woke up and pleaded "take it down." The team's response: "What if we just have the agent rewrite the whole thing from scratch?"

– Gergely Orosz, The Pragmatic Engineer

The clean-room approach created a new legal puzzle. If Anthropic claims that an AI-generated transformative rewrite infringes its copyright, it weakens its own defense in training-data copyright cases, where it argues that AI output derived from copyrighted input constitutes fair use. The same logic would apply here.

Currently, claw-code is being completely rewritten in Rust. Under the clean-room method, the architecture is studied and then reimplemented from scratch, rather than translating the TypeScript line by line.

Second Leak in 5 Days: The Rise of Claude Mythos 🦄

This source code leak was the second in five days. On March 26, a separate misconfiguration in Anthropic's content management system exposed nearly 3,000 internal files. The biggest revelation from this incident, reported by Fortune, was a new model called Claude Mythos.

Internally codenamed "Capybara," this model represents a tier above Opus. According to leaked draft blog posts, Capybara received dramatically high scores in coding, reasoning, and cybersecurity—making it the most powerful model Anthropic has ever built.

| Model Tier | Characteristics | Expected Pricing |
|---|---|---|
| Haiku | Smallest, cheapest, fastest | Low |
| Sonnet | Faster and cheaper, slightly less capable | Medium |
| Opus | Current largest and most capable model | High |
| Capybara/Mythos | Bigger and more powerful than Opus | Premium |

Roy Paz from LayerX Security suggested this model will likely launch in both "fast" and "slow" versions based on its larger context window. Anthropic has confirmed this model as a "step change" and "the most capable thing we've ever built."

Security Irony: The Paradox of Undercover Mode 🕵️

One of the most ironic discoveries from the leaked code was a system called "Undercover Mode." This was specifically built to prevent Anthropic's internal information from leaking to open-source repositories.

The system prompt injected into Claude's context literally says: "Do not blow your cover." They built an entire subsystem to ensure internal codenames or AI mentions don't appear in commit messages.
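The article describes Undercover Mode only as a system prompt, so the following is a different, complementary technique rather than a reconstruction: a simple check that scans a commit message for blocked terms before it is written. The blocklist and the `find_leaks` function are hypothetical.

```python
import re

# Hypothetical blocklist; the real terms and rules are not given in the article.
BLOCKED_TERMS = ["capybara", "kairos", "ultraplan"]


def find_leaks(commit_message, blocked_terms=BLOCKED_TERMS):
    """Return blocked terms that appear as whole words in the commit message."""
    found = []
    for term in blocked_terms:
        # Word boundaries avoid flagging unrelated words that merely contain a term.
        if re.search(rf"\b{re.escape(term)}\b", commit_message, re.IGNORECASE):
            found.append(term)
    return found
```

A commit hook built on a check like this could reject a message such as "Fix KAIROS tick scheduling" while letting ordinary messages through.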

And that subsystem itself leaked. Along with everything else, in a file anyone could download. This hasn't been a good week for Anthropic's entire brand identity as "the careful ones."

Key Takeaway

The real lesson from this incident is the difference between technical security and operational security. Even the most sophisticated internal security system can be undone by a one-line build configuration mistake. Software supply chain security is no longer optional; it's a matter of survival.

Community Reactions: Reddit and Hacker News Analysis 💬

Following the incident, thousands of comments flooded Reddit's r/LocalLLaMA, r/Anthropic, and Hacker News. Developer reactions largely fell into three categories.

🔬 Technical Analysts

"This is not just a chatbot wrapper. Claude Code is closer to an operating system for software work. Permissions, memory layers, background jobs, IDE bridges, MCP pipelines, multi-agent orchestration—all of it is stacked around the model."

⚖️ Legally Concerned

"Anthropic is issuing DMCA takedowns, but the code has spread too widely to control. Mirrors are everywhere. @gitlawb mirrored the original code on decentralized git platform Gitlawb with a simple message: 'This will never be deleted.'"

🚀 Opportunists

"This gives Anthropic's competitors an opportunity to build a bridge. They can reverse engineer how Claude Code's agent harness works and use that knowledge to improve their own products."

Legal Issues: DMCA and Clean Room Reimplementation ⚖️

This incident raises questions in largely untested areas of copyright law. First, accidental publication does not grant an open-source license. Anthropic's copyright remains protected regardless of how the code became public. Unauthorized downloading, distribution, or use creates legal exposure.

However, the clean-room reimplementation strategy complicates matters. Developers studying how a system works and building their own version from scratch in a different language has been legally defensible in software for decades. The question is whether "having an AI agent study the architecture and rewrite it overnight in Rust" is considered the same thing.

Another complex layer is that Anthropic's CEO hinted that significant portions of Claude Code were written by Claude itself. If the code is AI-generated, copyright claims become even more complicated, despite courts generally maintaining copyright for AI-assisted works.

A New Paradigm for AI Development 🌅

This incident shows that the AI coding competition is no longer about who has the smartest model. It's about who has the best harness. OpenAI is intentionally open-sourcing parts of Codex CLI. Anthropic accidentally revealed a similar product architecture.

The difference isn't just PR. It tells you what each company thinks constitutes true competitive advantage. Open-sourcing the harness means betting on advantage in models, product velocity, ecosystem, and distribution. Keeping the harness private implies the orchestration layer itself is part of the crown jewels.

🎯 4 Action Items for Enterprise Leaders

- Confirm if Claude is a long-term platform: This leak answers that question. The engineering depth behind Claude Code, the multi-agent architecture, and proactive assistance features show this is infrastructure, not a chatbot wrapper.

- Watch release timelines: Anthropic may now accelerate features like KAIROS. The product you evaluate today may look very different by Q3.

- Re-evaluate operational security: Two leaks in five days is a real data point for vendor risk assessment.

- Prepare for "always-on AI": KAIROS isn't here yet but the direction is clear. Enterprises that identify repetitive tasks where AI can handle active monitoring, flagging, and follow-up will be prepared.

The base model wasn't leaked. None of Claude's training data, weights, or core intelligence was exposed. This was the CLI wrapper (the text-based interface developers use to interact with the tool), not the engine. Data processed through Claude's API remains secure, and Claude's security as a tool for enterprises hasn't changed.

What has changed is visibility. We now have a much clearer product roadmap than Anthropic intended, and we know it's truly ambitious. KAIROS, ULTRAPLAN, and Coordinator Mode aren't concepts from slide decks. They're built into the codebase behind feature flags. The gap between "announced product" and "shipped product" is much smaller than anyone outside Anthropic knew.