On April 23, 2026, Xiaomi sent shockwaves through the AI industry. With the release of the MiMo-V2.5 series, a new era for open-source AI models has begun. This ultra-large-scale Sparse MoE model with 310B parameters (15B active) is stepping forward to compete head-to-head with commercial frontier models like GPT-5.4, Claude Opus 4.6, and Gemini 3 Pro.

Three innovations stand out: a 1-million-token context window, native multimodal processing, and API costs 50% lower than frontier models. Together they show AI development moving toward practical value for developers and enterprises, not only technical milestones.

1️⃣ MiMo-V2.5 Core Specifications & Architecture

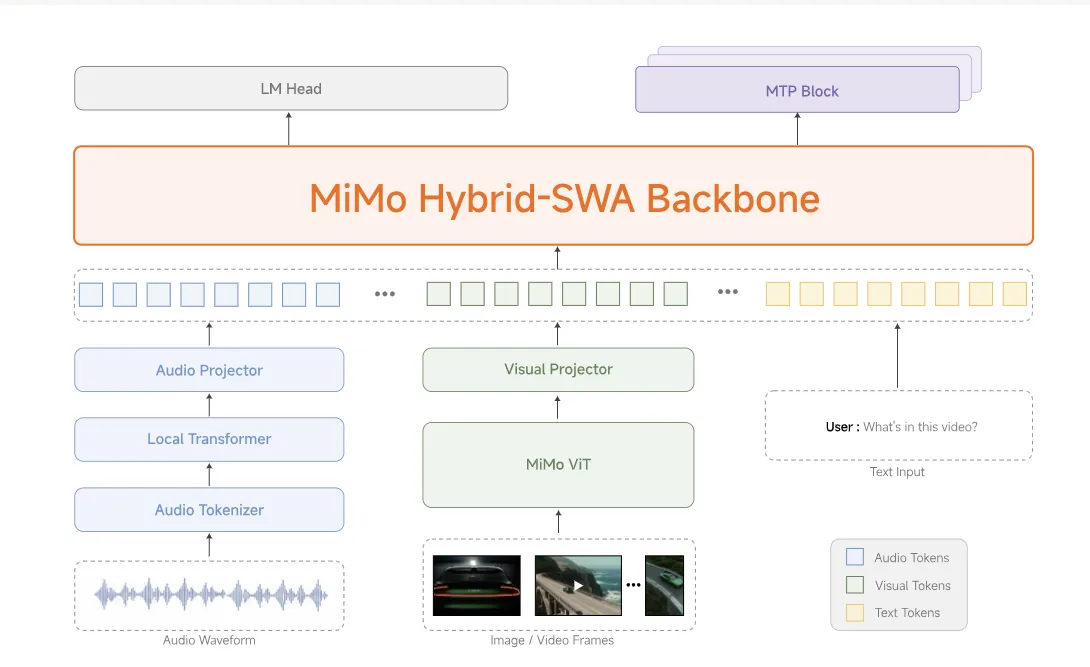

MiMo-V2.5 is not just a language model—it's an all-in-one multimodal AI that natively integrates vision, audio, and text. Trained by Xiaomi's MiMo team on 48 trillion tokens, this model went through a sophisticated 5-stage training pipeline.

🔧 Technical Specifications

| Specification | Value |
|---|---|
| Total Parameters | 310B (310 Billion) |
| Active Parameters | 15B (15 Billion) - Sparse MoE |
| Training Data | 48T (48 Trillion) Tokens |
| Context Window | Up to 1,000,000 Tokens |
| Architecture | Hybrid Sliding-Window Attention |
| Precision | FP8 (E4M3) Mixed Precision |
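To make the sparse-MoE figures concrete, the short calculation below works out the active-parameter fraction and a rough weight footprint. It is a back-of-the-envelope sketch that assumes one byte per parameter for FP8 and ignores activations, the KV cache, and any layers kept at higher precision.

```python
# Back-of-the-envelope numbers from the specification table.
# Assumption: FP8 stores one byte per parameter; activations, KV cache,
# and any higher-precision layers are ignored.
TOTAL_PARAMS = 310e9   # 310B total parameters
ACTIVE_PARAMS = 15e9   # 15B parameters active per token
BYTES_PER_PARAM_FP8 = 1

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
weight_gb = TOTAL_PARAMS * BYTES_PER_PARAM_FP8 / 1e9

print(f"Active fraction per token: {active_fraction:.1%}")
print(f"Approximate FP8 weight size: {weight_gb:.0f} GB")
```

Under these assumptions, fewer than 5% of the weights take part in each token's forward pass, yet all 310B still have to be stored. Sparse activation lowers compute per token, not the memory needed to hold the model.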

🏗️ 5-Stage Training Pipeline

Stage 1: Text Pre-training

Building the LLM backbone using diverse corpora. Inherits the hybrid sliding-window attention architecture from MiMo-V2-Flash for both efficiency and performance.
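The article does not spell out how the hybrid scheme works. A common reading, and the assumption made in this sketch, is that most layers restrict each token to a fixed window of recent tokens while some layers keep full causal attention. The window size, layer count, and layer ratio below are illustrative values, not MiMo-V2.5's real configuration.

```python
def attention_mask(seq_len, window=None):
    """Return a causal mask as a list of rows; True means "may attend".

    window=None gives full causal attention; an integer restricts each
    token to itself and the previous (window - 1) tokens.
    """
    mask = []
    for query in range(seq_len):
        row = []
        for key in range(seq_len):
            causal = key <= query
            in_window = window is None or query - key < window
            row.append(causal and in_window)
        mask.append(row)
    return mask


def layer_windows(num_layers, window, global_every):
    """Hypothetical hybrid schedule: every `global_every`-th layer is global."""
    return [None if (i + 1) % global_every == 0 else window
            for i in range(num_layers)]


# Illustrative values only; the real window size and layer ratio are not
# given in this article.
schedule = layer_windows(num_layers=6, window=3, global_every=3)
print(schedule)  # [3, 3, None, 3, 3, None]

local = attention_mask(seq_len=5, window=3)
print(sum(local[4]))  # 3: the last token attends to 3 positions, not 5
```

The efficiency gain comes from the windowed layers: their cost per token stays fixed as the sequence grows, while the occasional global layer preserves access to distant context.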

Stage 2: Projector Warm-up

Aligning audio and visual encoders with the language model. Xiaomi connects its proprietary pre-trained encoders through lightweight projectors.
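As a rough illustration of what a projector does, the sketch below maps encoder feature vectors into the language model's embedding width with a single linear layer. Whether MiMo-V2.5 uses a linear layer or a small MLP is not stated, and all sizes here are hypothetical.

```python
import random


def make_projector(d_encoder, d_model, seed=0):
    """Create a random weight matrix of shape (d_encoder, d_model)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(d_model)]
            for _ in range(d_encoder)]


def project(features, weights):
    """Map each encoder feature vector into the language model's width."""
    d_model = len(weights[0])
    return [
        [sum(x * weights[i][j] for i, x in enumerate(vec)) for j in range(d_model)]
        for vec in features
    ]


# Hypothetical sizes: 4 image patches, encoder width 8, LLM width 16.
patches = [[1.0] * 8 for _ in range(4)]
weights = make_projector(d_encoder=8, d_model=16)
tokens = project(patches, weights)
print(len(tokens), len(tokens[0]))  # 4 16
```

After projection, each patch or audio frame has the same width as a text token embedding, so the language model can consume it in the same sequence as text.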

Stage 3: Multimodal Large-Scale Pre-training

Extended training with high-quality cross-modal data. Learning correlations between images, audio, video, and text to cultivate native multimodal understanding.

Stage 4: Supervised Fine-tuning & Agent Post-training

Gradually expanding context window from 32K → 256K → 1M while strengthening long-context understanding and AI Agent capabilities.

Stage 5: RL & MOPD

Final refinement of perception, reasoning, and Agent execution capabilities through Reinforcement Learning and Multi-Teacher On-Policy Distillation (MOPD).

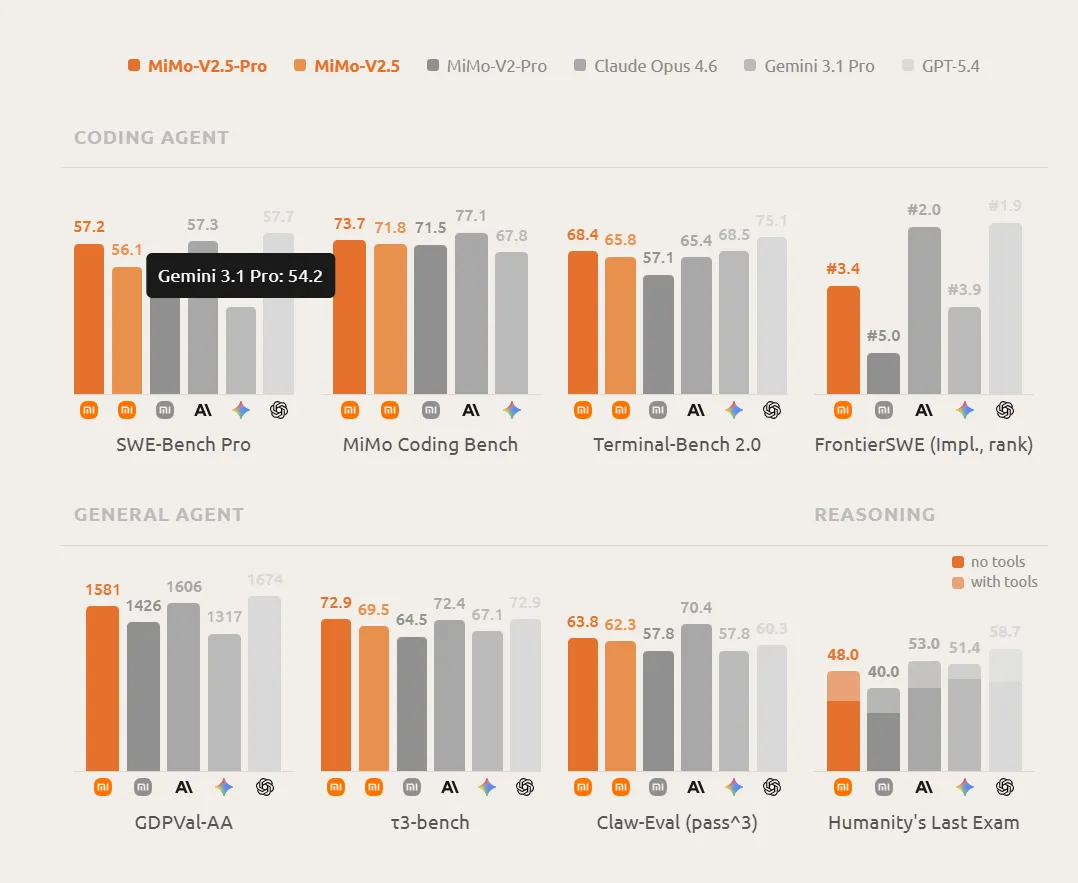

2️⃣ Benchmark Performance: Comparison with GPT-5 & Claude

The most impressive aspect of MiMo-V2.5 is that it delivers performance close to frontier commercial models, and ahead of some of them, while costing only half as much. Let's examine the key benchmark results.

📊 AI Agent Performance Benchmarks

| Benchmark | MiMo-V2.5 | MiMo-V2-Pro | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| Claw-Eval (General) | 62.3 | 57.8 | 65.4 | 60.3 | 57.8 |
| Coding Agent | 71.8 | 71.5 | 77.1 | - | 67.8 |
| MiMo Coding Bench | 62.3 | 57.8 | 70.8 | - | 57.8 |
| Terminal-Bench 2.0 | 56.1 | 55.0 | 57.3 | 57.7 | 54.2 |
| GDPVal-AA (Elo) | 1578-1581 | 1426 | 1600+ | - | - |

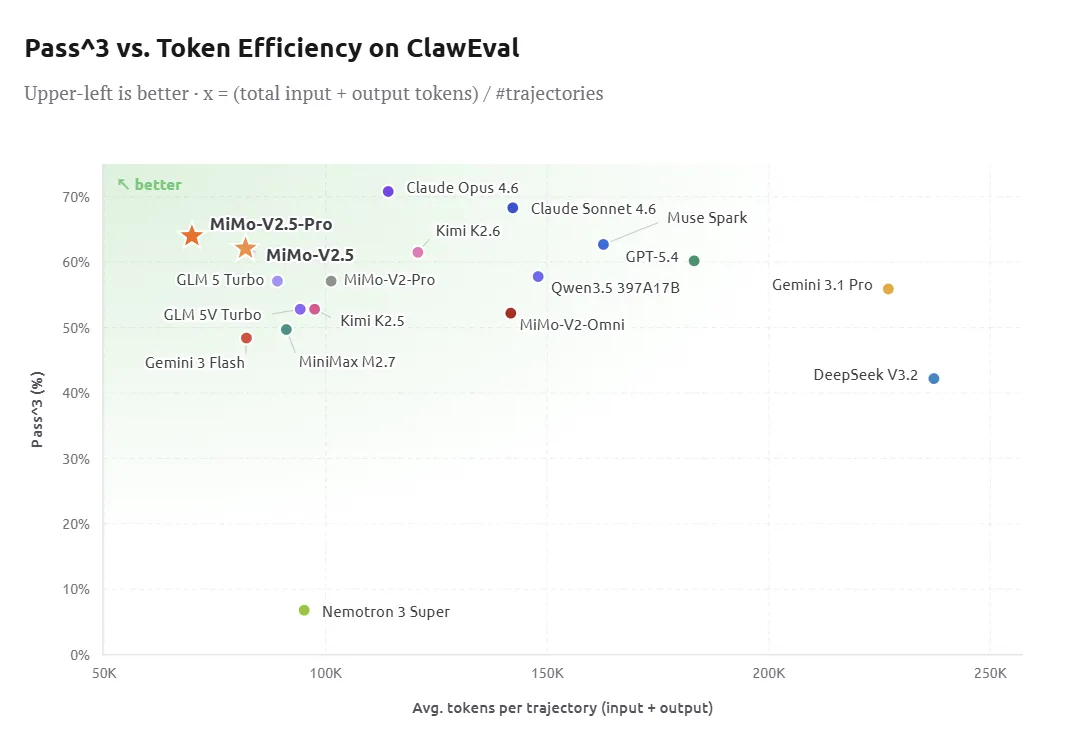

Claw-Eval is a benchmark that evaluates everyday AI Agent tasks. MiMo-V2.5 scored 62.3, placing it on the Pareto frontier of performance and efficiency. This surpasses MiMo-V2-Pro and puts it in close competition with Claude Opus 4.6 (65.4).
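To show what "on the Pareto frontier" means in practice, here is a small sketch that finds the models no other model beats on both cost and score. It uses the input prices and ClawEval scores from the comparison table in section 7. Treating input price as the efficiency axis is an assumption, since the article does not say which efficiency measure the original claim uses.

```python
# (input price, ClawEval score) pairs as quoted in the comparison table
# in section 7. Using input price as the cost axis is an assumption.
MODELS = {
    "MiMo-V2.5": (0.50, 62.3),
    "MiMo-V2.5-Pro": (1.00, 75.7),
    "Claude Opus 4.6": (15.0, 65.4),
    "GPT-5.4": (20.0, 60.3),
    "Gemini 3.1 Pro": (7.0, 57.8),
    "Kimi K2.6": (2.0, 66.7),
}


def dominates(a, b):
    """True if a is at least as cheap and as accurate as b, and better in one."""
    (cost_a, score_a), (cost_b, score_b) = a, b
    return (cost_a <= cost_b and score_a >= score_b
            and (cost_a < cost_b or score_a > score_b))


def pareto_frontier(models):
    """Return the names of models that no other model dominates."""
    return [
        name for name, point in models.items()
        if not any(dominates(other, point)
                   for other_name, other in models.items() if other_name != name)
    ]


print(pareto_frontier(MODELS))
```

With these figures, only MiMo-V2.5 and MiMo-V2.5-Pro are undominated. A different cost axis, such as tokens consumed per task, could change the result.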

💻 Coding Capability Tests

For developers, coding performance is crucial, and MiMo-V2.5 delivers impressive results. Particularly noteworthy is that it achieves performance comparable to MiMo-V2.5-Pro on everyday coding tasks while costing only half as much.

3️⃣ Multimodal Capabilities: Seeing, Hearing, Understanding

MiMo-V2.5's greatest strength is its native multimodal processing. A single model understands and reasons over images, audio, video, and text together, without separate models or pipelines. On every benchmark below where both are reported, it scores higher than MiMo-V2-Omni.

👁️ Visual Understanding

| Benchmark | MiMo-V2.5 | MiMo-V2-Omni | Gemini 3 Pro | GPT-5.4 |
|---|---|---|---|---|
| Image Understanding | 81.0 | 80.1 | 81.4 | - |
| CharXiv RQ (Chart Analysis) | 81.0 | - | - | 81.2 |
| MMMU-Pro | 77.9 | 76.8 | - | - |
| HR-Bench (4K) | 87.2 | 86.7 | - | 89.0 |
| OmniDocBench | 88.5 | 83.3 | 86.4 | - |

The score of 81.0 on CharXiv RQ is particularly noteworthy. This benchmark measures the ability to understand and interpret complex academic charts, graphs, and diagrams—MiMo-V2.5 performs nearly on par with GPT-5.4 (81.2).

🎥 Video Understanding

In video understanding, MiMo-V2.5 demonstrates performance comparable to Gemini 3 Pro, placing it among the best open-source models available.

Video-MME Benchmark

- MiMo-V2.5: 87.7 points (0.7 points behind Gemini 3 Pro at 88.4)

- MiMo-V2-Omni: 85.3 points

- Features: Stable performance on long-form video, cross-frame reasoning, and minute-level scene recall

🎵 Audio & Speech Processing

The MiMo-V2.5 series also includes dedicated speech models: MiMo-V2.5-ASR for speech recognition and the MiMo-V2.5-TTS series for speech synthesis.

MiMo-V2.5-ASR

Supports Chinese-English bilingual speech recognition with high accuracy in real-world environments including various accents, dialects, and background noise. Capable of understanding continuous audio over 10 hours.

MiMo-V2.5-TTS

Significantly improved speech synthesis naturalness with support for multiple languages/dialects/voice tones. Capable of emotional expression and intonation control, generating speech nearly indistinguishable from human voice.

4️⃣ AI Agent Performance: Real-World Automation Tests

MiMo-V2.5-Pro has demonstrated remarkable capabilities on complex, long-horizon tasks. Xiaomi has validated the model's real-world performance through practical case studies.

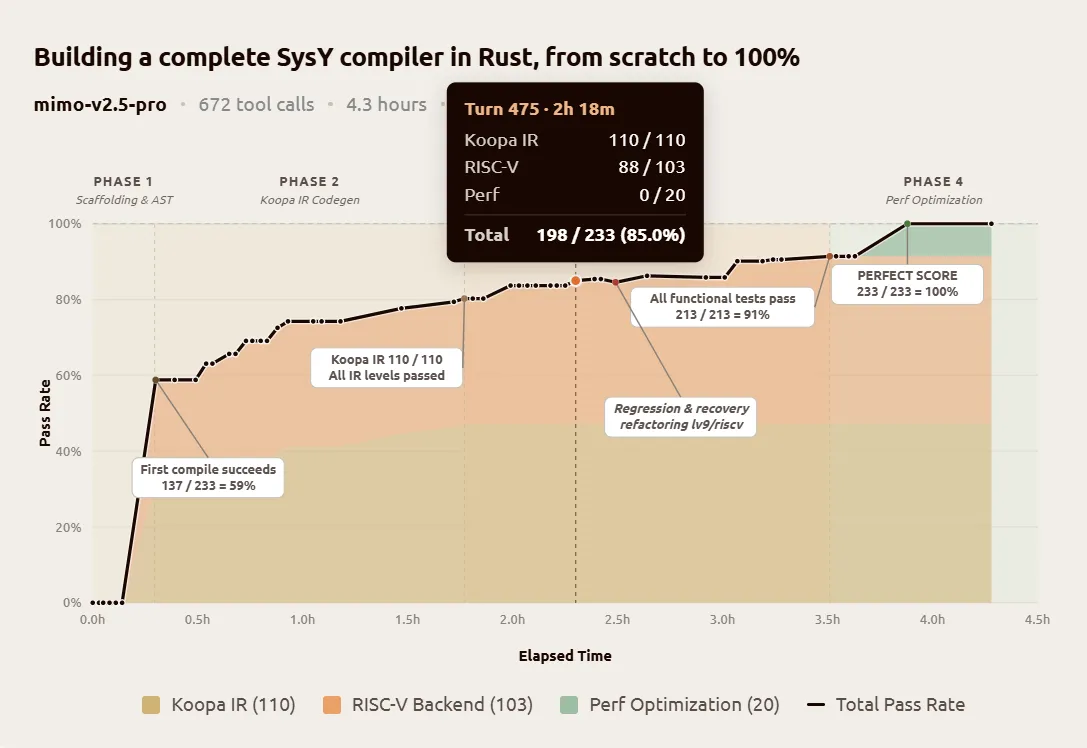

🎓 Case 1: Peking University Compiler Project

Building a Complete SysY Compiler in Rust

Task: Peking University Compiler Principles course project. Includes lexical analyzer, parser, AST, Koopa IR code generation, RISC-V assembly backend, and performance optimization.

- Time for Peking University undergraduates: Several weeks

- Time for MiMo-V2.5-Pro: 4.3 hours

- Tool calls: 672 times

- Final score: 233/233 (Perfect)

- Cold start pass rate: 137/233 (59%)

Rather than random trial-and-error, the model systematically built the entire compiler pipeline. It achieved perfect scores on Koopa IR (110/110), RISC-V backend (103/103), and performance optimization (20/20).

🎬 Case 2: Video Editor Web App Development

"Build a video editor web application"

Prompt: Just the simple instruction "Build a video editor web application"

- Implemented features: Multi-track timeline, clip trimming, cross-fades, audio mixing, export

- Code volume: 8,192 lines

- Tool calls: 1,868 times

- Time taken: 11.5 hours (autonomous work)
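As a quick sanity check on the two case studies, the sketch below recomputes a few figures from the numbers quoted above: the compiler sub-scores, the cold-start pass rate, and the tool-call rate of each run. It only rearranges the article's own numbers and adds no new measurements.

```python
# Figures quoted in the two case studies above.
compiler = {"hours": 4.3, "tool_calls": 672}
video_editor = {"hours": 11.5, "tool_calls": 1868, "lines_of_code": 8192}

# The three compiler sub-scores should add up to the final 233/233.
sub_scores = {"Koopa IR": 110, "RISC-V backend": 103, "Performance": 20}
total_tests = sum(sub_scores.values())

cold_start_rate = 137 / total_tests

for name, run in (("compiler", compiler), ("video editor", video_editor)):
    rate = run["tool_calls"] / run["hours"]
    print(f"{name}: {rate:.0f} tool calls per hour")

print(f"sub-score total: {total_tests}")
print(f"cold-start pass rate: {cold_start_rate:.1%}")
```

The sub-scores do sum to 233, and both runs work out to roughly 160 tool calls per hour, which suggests a similar working pace across the two very different tasks.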

📈 Token Efficiency

The MiMo-V2.5 series achieves the same performance with fewer tokens. This translates directly to API cost savings.

| Comparison | Token Savings | ClawEval Score |
|---|---|---|
| MiMo-V2.5-Pro vs Kimi K2.6 | 42% savings | Equivalent |
| MiMo-V2.5 vs Muse Spark | 50% savings | Equivalent |
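The link between token savings and cost can be written as a one-line formula: relative cost equals the fraction of tokens still used, multiplied by the per-token price ratio. The sketch below assumes equal per-token prices unless told otherwise, which is a simplification, and the `relative_cost` helper is a name made up for this example.

```python
def relative_cost(token_savings, price_ratio=1.0):
    """Cost of a task relative to a baseline model.

    token_savings: fraction of tokens saved versus the baseline (0.42 = 42%).
    price_ratio: per-token price divided by the baseline's price
                 (1.0 means both models charge the same per token).
    """
    if not 0.0 <= token_savings < 1.0:
        raise ValueError("token_savings must be in [0, 1)")
    return (1.0 - token_savings) * price_ratio


# Savings figures from the table; equal per-token prices are an assumption.
print(f"{relative_cost(0.42):.2f}")
print(f"{relative_cost(0.50):.2f}")
```

If the per-token price is also lower than the baseline's, the two effects multiply, so passing a `price_ratio` below 1.0 gives the combined saving.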

5️⃣ Pricing Strategy & Value Analysis

MiMo-V2.5's greatest appeal is its value: frontier-level performance at about 1/5 the cost of competitors.

💰 Token Plan Pricing

MiMo-V2.5

- Credits: 1x (1 token = 1 credit)

- Context: Up to 1M tokens

- Multimodal support

- Optimized for general Agent tasks

MiMo-V2.5-Pro

- Credits: 2x (1 token = 2 credits)

- Context: Up to 1M tokens

- Optimized for complex long-horizon tasks

- GDPVal-AA 1578 points

🌙 Discount Benefits

Night Discount

Between 00:00 and 08:00 Beijing Time, credit consumption for all models receives an additional 20% discount (0.8x multiplier).

Auto-Renewal Discount

Continuous monthly subscription: 30% off for existing users, 23% off for new users (one-time only)

Annual Subscription

12% discount on annual subscription (cannot be combined with auto-renewal/new user discounts)
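The credit rules above can be combined into a small estimator. This is a sketch under stated assumptions: the model labels are placeholders chosen for this example and are not API identifiers, the night window is treated as 00:00 inclusive to 08:00 exclusive, and subscription discounts are left out because they apply to the plan price and not to credit consumption.

```python
from datetime import datetime, timedelta, timezone

BEIJING = timezone(timedelta(hours=8))  # Beijing time is UTC+8, no DST
CREDIT_MULTIPLIER = {"mimo-v2.5": 1.0, "mimo-v2.5-pro": 2.0}
NIGHT_MULTIPLIER = 0.8  # 20% night discount


def credits_used(tokens, model, when):
    """Estimate credits for `tokens` on `model` at timezone-aware time `when`."""
    if when.tzinfo is None:
        raise ValueError("`when` must be timezone-aware")
    credits = tokens * CREDIT_MULTIPLIER[model]
    # Assumption: the window is 00:00 inclusive to 08:00 exclusive.
    if 0 <= when.astimezone(BEIJING).hour < 8:
        credits *= NIGHT_MULTIPLIER
    return credits


daytime = datetime(2026, 5, 1, 14, 0, tzinfo=BEIJING)
night = datetime(2026, 5, 1, 2, 0, tzinfo=BEIJING)
print(credits_used(1_000_000, "mimo-v2.5", daytime))
print(credits_used(1_000_000, "mimo-v2.5-pro", night))
```

One practical consequence: a Pro job run at night costs 1.6 credits per token, so scheduling long batch work inside the discount window recovers part of the 2x premium.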

6️⃣ Community Reactions & Real User Reviews

The announcement of MiMo-V2.5 has ignited discussions across global developer communities. Active conversations are happening on Reddit, Hacker News, Chinese developer forums, and more.

🌍 Reddit Reactions

"News that Xiaomi's MiMo-V2-Pro is competing with Anthropic on benchmarks hit 118K views in just 3 days. The rapid catch-up from the open-source camp is impressive."

"310B parameters with only 15B active... This showcases the evolution of MoE architecture. Especially impressive is supporting 1M context at this price point—it's a game-changer."

"If this gets open-sourced, could we run it locally? With FP8 quantization, maybe it's possible even with 32GB VRAM. Excited to see!"

🇨🇳 Chinese Developer Communities

"Completing Peking University's compiler project in 4.3 hours sounds unbelievable. Has anyone actually tested this?"

"After testing MiMo-V2.5 hands-on, it showed excellent performance in coding logic (like Rubik's cube algorithm restoration) and writing readability. Overall, it's competitive with top-tier models."

"Since 2024, AI-driven productivity gains have been fueling economic growth. MiMo-V2.5-Pro takes this a step further as a multimodal model that understands not just text but also images, audio, and video."

📊 Real User Testing Results

In BridgeBench's AI Coding & Vibe Coding benchmark, MiMo-V2.5 achieved the following results:

| Model | Overall Score | Pass/Fail | Avg Latency | Total Cost |
|---|---|---|---|---|
| MiMo-V2.5 | 47.6 | 7 Pass / 5 Fail | 71.3s | $0.26 |
| MiMo-V2.5-Pro | 73.1 | 11 Pass / 1 Fail | 68.0s | $0.19 |
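The pass/fail counts and total costs in the table can be turned into two easier-to-compare numbers: pass rate and cost per passed task. The sketch below only rearranges the table's figures, and the `summarize` helper is a name invented for this example.

```python
# BridgeBench rows from the table above: (passed, failed, total cost in USD).
RESULTS = {
    "MiMo-V2.5": (7, 5, 0.26),
    "MiMo-V2.5-Pro": (11, 1, 0.19),
}


def summarize(passed, failed, cost):
    """Return (pass rate, cost per passed task) for one benchmark row."""
    return passed / (passed + failed), cost / passed


for model, row in RESULTS.items():
    pass_rate, cost_per_pass = summarize(*row)
    print(f"{model}: {pass_rate:.1%} pass rate, ${cost_per_pass:.3f} per passed task")
```

On these numbers the Pro model passes more tasks and spends less per passed task, despite its higher credit multiplier.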

7️⃣ MiMo-V2.5 vs Competitors: Deep Comparison

Let's compare MiMo-V2.5 against major frontier models including GPT-5.4, Claude Opus 4.6, Gemini 3 Pro, and Kimi K2.6.

📊 Comprehensive Comparison Table

| Model | Parameters | Context | Multimodal | ClawEval | Price (input/output) |
|---|---|---|---|---|---|
| MiMo-V2.5 | 310B (15B active) | 1M tokens | ✅ Native | 62.3 | $0.50 / $1.50 |
| MiMo-V2.5-Pro | 310B+ | 1M tokens | ✅ Native | 75.7 | $1.00 / $3.00 |
| Claude Opus 4.6 | Undisclosed | 200K tokens | ✅ | 65.4 | $15 / $75 |
| GPT-5.4 | Undisclosed | 256K tokens | ✅ | 60.3 | $20 / $80 |
| Gemini 3.1 Pro | Undisclosed | 2M tokens | ✅ Native | 57.8 | $7 / $21 |
| Kimi K2.6 | 1T+ | 2M tokens | ✅ | 66.7 | $2 / $8 |
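To see how the listed prices translate into a bill, the sketch below prices one hypothetical workload on every model and reports the result relative to MiMo-V2.5. The table does not state its pricing unit, so per-million-token USD pricing is an assumption here, and the 10-to-2 input/output mix is made up for illustration.

```python
# (input price, output price) as listed in the comparison table. The table
# does not state the unit; per-million-token USD pricing is an assumption.
PRICES = {
    "MiMo-V2.5": (0.50, 1.50),
    "MiMo-V2.5-Pro": (1.00, 3.00),
    "Claude Opus 4.6": (15.0, 75.0),
    "GPT-5.4": (20.0, 80.0),
    "Gemini 3.1 Pro": (7.0, 21.0),
    "Kimi K2.6": (2.0, 8.0),
}


def workload_cost(model, input_units, output_units):
    """Cost of a workload measured in the table's pricing units."""
    price_in, price_out = PRICES[model]
    return input_units * price_in + output_units * price_out


# Hypothetical workload: 10 units of input and 2 units of output.
baseline = workload_cost("MiMo-V2.5", 10, 2)
for model in PRICES:
    ratio = workload_cost(model, 10, 2) / baseline
    print(f"{model}: {ratio:.1f}x the cost of MiMo-V2.5")
```

The ratio depends on the input/output mix, because output tokens are priced higher than input tokens on every model in the table, so it is worth rerunning the calculation with your own traffic profile.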

🏆 Strengths & Weaknesses

MiMo-V2.5 Strengths

- Overwhelming value (1/5 the cost)

- Native multimodal processing

- 1M token context window

- Planned open-source release

- High token efficiency (40-60% savings)

MiMo-V2.5 Weaknesses

- Slightly behind Claude/GPT on extremely complex reasoning tasks

- Limited language support beyond English

- Ecosystem and tooling still maturing

- Brand recognition (vs OpenAI, Anthropic)

8️⃣ Practical Guide: From Installation to API Calls

Here's a step-by-step guide to actually using MiMo-V2.5.

🔑 Step 1: Get Your API Key

- Visit the Xiaomi MiMo Platform

- Create an account and log in

- Subscribe to a Token Plan (free trial available)

- Generate your API key
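Once you have a key, it is safer to keep it out of your source code. The helper below is a minimal sketch that reads the key from an environment variable. The variable name `MIMO_API_KEY` is a placeholder chosen for this example, not a name defined by the platform.

```python
import os


def load_api_key(env_var="MIMO_API_KEY"):
    """Read the API key from an environment variable.

    The variable name MIMO_API_KEY is a placeholder chosen for this
    example, not a name defined by the platform.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return key
```

You can then pass `load_api_key()` as the `api_key` argument when creating the client, in place of a hard-coded string.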

💻 Step 2: Install Python SDK

    pip install openai  # Using the OpenAI-compatible API

🚀 Step 3: Make Your First API Call

    from openai import OpenAI

    # Point the OpenAI client at the MiMo endpoint
    client = OpenAI(
        api_key="your-api-key",  # replace with the key from Step 1
        base_url="https://api.mimo.xiaomi.com/v1",
    )

    response = client.chat.completions.create(
        model="mimo-v2-5",
        messages=[
            {"role": "user", "content": "Hello! Please tell me about MiMo-V2.5."}
        ],
        max_tokens=1000,
    )

    # Print the text of the first completion
    print(response.choices[0].message.content)

🖼️ Step 4: Multimodal Input Example

    # Image analysis example (reuses the `client` created in Step 3)
    response = client.chat.completions.create(
        model="mimo-v2-5",
        messages=[
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": "What do you see in this image?"},
                    {
                        "type": "image_url",
                        "image_url": {"url": "https://example.com/image.jpg"},
                    },
                ],
            }
        ],
    )

    # Print the model's description of the image
    print(response.choices[0].message.content)

9️⃣ Xiaomi's AI Strategy & Future Outlook

The release of MiMo-V2.5 marks Xiaomi's formal entry into the major AI competition. Xiaomi plans to invest 60 billion RMB (roughly $8.5 billion USD at about 7 RMB to the dollar) in AI over the next three years.

📈 Rapid Development Pace

- December 2025: MiMo-V2-Flash open-sourced

- March 2026: MiMo-V2 series (Pro, Omni, TTS)

- April 2026: MiMo-V2.5 series launch with planned open-source release

This rapid iteration pace is among the fastest in the industry.

🌐 Open-Source Strategy

The MiMo-V2.5 series will be released as fully open-source. Weights, tokenizers, and complete model cards will be available for download on Hugging Face.

Open-Source Model Information

| Model | Total Parameters | Active Parameters | Context | Precision |
|---|---|---|---|---|
| MiMo-V2.5-Base | 310B | 15B | 256K | FP8 |
| MiMo-V2.5 | 310B | 15B | 1M | FP8 |

🔮 Future Outlook

Xiaomi's MiMo team has announced they are training next-generation models with deeper reasoning capabilities, tighter tool integration, and richer real-world grounding.

🔟 Conclusion: The AI Democratization Brought by MiMo-V2.5

Xiaomi MiMo-V2.5 represents more than just a technical achievement—it opens a new chapter in AI democratization. By delivering frontier-grade performance at accessible prices, it enables organizations of all sizes, from startups to enterprises, to leverage advanced AI.

🎯 Key Takeaways

🏆 Performance

Benchmark scores comparable to GPT-5.4 and Claude Opus 4.6. ClawEval 62.3, GDPVal-AA 1578-1581.

💰 Value

1/5 the cost of competitors. 40-60% token savings for equivalent performance.

🎨 Multimodal

Native processing of text, images, audio, and video. Single model understands all modalities.

📚 Context

Up to 1-million-token context window. Built for long documents and extended video.

🤖 Agent

Automation of complex long-horizon tasks. Completed Peking University compiler project in 4.3 hours.

🔓 Open Source

Planned fully open-source release, with weights to be available as a free download on Hugging Face.

🚀 Get Started

Try MiMo-V2.5 today on the Xiaomi MiMo Platform. Start with the free trial and integrate AI into your projects.

Welcome to the new era of AI. Join us with MiMo-V2.5. 🎉